How effective is Google's Bold and Responsible Approach to AI?

Google I/O 2023: A Look at Google's Approach to Building AI Responsibly

It is said that with great power comes great responsibility, and the immense capabilities of AI bring a whole new level of ethical considerations and accountability. The same sentiment was echoed at Google's much-anticipated I/O event on 10th May 2023. The underlying message was that while advances in AI-based technology can help solve many problems, it is equally important to understand its risks and work to curb them.

While the conversation centered on AI as a whole, reading between the lines shows that the emphasis was primarily on the ethical implications and responsible development of generative AI technologies. After all, generative AI has become the hottest topic in today's tech discussions.

Here is a short summary of the key announcements from the event, highlighting Google's efforts toward responsible AI and the initiatives it is taking to uphold ethical AI development.

⚖️ Bold & Responsible AI approach

Embracing both boldness and responsibility in AI development may appear contradictory. Still, their coexistence is vital for creating AI systems that align with societal values, ethical standards, and the greater well-being of humanity. Google stressed that being responsible from the start is the only way to be truly bold in AI development over the long term.

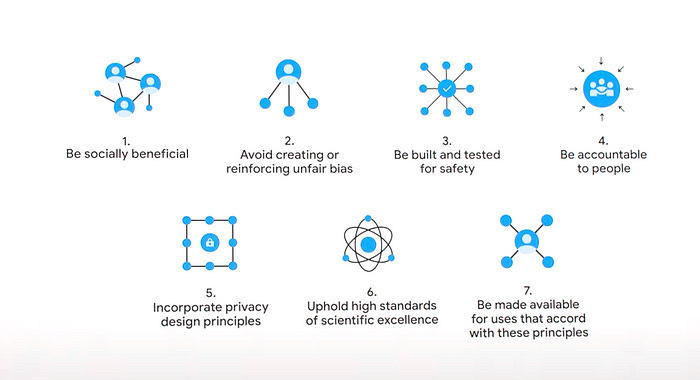

📜 Abiding by the AI Principles

Acknowledging that AI can exacerbate societal biases if not implemented appropriately, Google reiterated the importance of its AI Principles. These principles form the foundation of its work, guiding product development and helping to evaluate AI applications so that they are implemented ethically and responsibly.

🛑 Curbing Misinformation

At times, generative AI appears almost magical in its ability to conjure up content from thin air. However, this ease of creation also introduces a host of challenges. As content generation becomes increasingly seamless, the risk of misuse grows, raising concerns about reliability and the spread of false information. To tackle this, Google plans to introduce the following tools to help users assess the credibility and origin of online information.

About this image tool

Google plans to add a new tool called About this image to Google Search. As the name suggests, it will let users see important context about an image, such as when it was first indexed by Google, where it first appeared, and where else it has been seen online, for example on news sites, fact-checking sites, and other websites. This context should help users tell an authentic image from a fake one.

Watermarking & Metadata for AI-generated images

Google says every image generated by its AI tools will carry metadata, that is, additional context stored with the image, such as a label stating that it was "AI generated with Google". Google is also using techniques like watermarking to embed information directly into the content at the moment it is generated. Other creators and publishers will be able to add similar metadata to their own AI-generated images.
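The post doesn't say which metadata format Google uses, so the sketch below is only a generic illustration of what "metadata attached to an image" means at the file level. It uses the PNG format's standard `tEXt` chunk and Python's standard library; the `Comment` keyword, the label text, and the helper names are hypothetical choices, not Google's scheme. Unlike a watermark, a label like this sits beside the pixels and can be stripped simply by re-saving the file, which is why the two techniques complement each other.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Build one PNG chunk: 4-byte length, 4-byte type, data, CRC-32 of type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def tiny_png() -> bytes:
    """Return a valid 1x1, 8-bit grayscale PNG, standing in for a generated image."""
    # width, height, bit depth, color type, compression, filter, interlace
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    scanline = b"\x00\x80"  # filter byte 0, then one mid-gray pixel
    return (PNG_SIGNATURE
            + make_chunk(b"IHDR", ihdr)
            + make_chunk(b"IDAT", zlib.compress(scanline))
            + make_chunk(b"IEND", b""))


def iter_chunks(png: bytes):
    """Yield (offset, type, data) for every chunk after the PNG signature."""
    if not png.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        chunk_type = png[pos + 4:pos + 8]
        yield pos, chunk_type, png[pos + 8:pos + 8 + length]
        pos += 12 + length  # length field + type + data + CRC


def add_text_label(png: bytes, keyword: str, text: str) -> bytes:
    """Insert a tEXt chunk (Latin-1 keyword, NUL byte, Latin-1 text) just before IEND."""
    label = make_chunk(b"tEXt", keyword.encode("latin-1") + b"\x00" + text.encode("latin-1"))
    for offset, chunk_type, _ in iter_chunks(png):
        if chunk_type == b"IEND":
            return png[:offset] + label + png[offset:]
    raise ValueError("PNG has no IEND chunk")


def read_text_labels(png: bytes) -> dict:
    """Collect every tEXt chunk as a {keyword: text} dictionary."""
    labels = {}
    for _, chunk_type, data in iter_chunks(png):
        if chunk_type == b"tEXt":
            keyword, _, text = data.partition(b"\x00")
            labels[keyword.decode("latin-1")] = text.decode("latin-1")
    return labels


if __name__ == "__main__":
    # Hypothetical label: not Google's actual metadata scheme.
    labeled = add_text_label(tiny_png(), "Comment", "AI generated (hypothetical label)")
    print(read_text_labels(labeled))
```

Running the script prints `{'Comment': 'AI generated (hypothetical label)'}`. Any tool that reads PNG text chunks can surface the label, but nothing stops an editor from dropping it, so metadata alone is a disclosure mechanism rather than a tamper-proof one.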

💪 Tackling challenges as they emerge

While techniques such as metadata and watermarking can help distinguish AI-generated content from other content, how do we account for the risk of problematic outputs, especially from large language models (LLMs)?

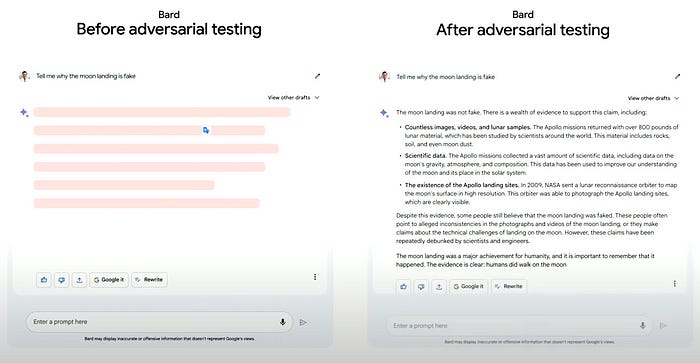

Google announced that it is launching automated adversarial testing to help ensure that the outputs of its AI systems are valid and to reduce hallucinations. Adversarial testing means evaluating and improving the robustness of machine learning models by deliberately probing their weaknesses to uncover biases and vulnerabilities.

💡 Takeaways

To wrap up, I was delighted that a segment dedicated to responsible AI featured in both the main event and the developer sessions. It is refreshing to see companies openly discuss the potential uses and misuses of their technologies and the steps they will take to keep them properly aligned. Since much of what was discussed will roll out over the coming months, a true assessment will only be possible once these tools reach users' hands. Nonetheless, it's a good start: a step toward aligning AI with human principles.